Technology

Introducing Confluent Private Cloud: Cloud-Level Agility for Your Private Infrastructure

Confluent Private Cloud (CPC) is a new software package that extends Confluent’s cloud-native innovations to your private infrastructure. CPC offers an enhanced broker with up to 10x higher throughput and a new Gateway that provides network isolation and central policy enforcement without client...

Queues for Apache Kafka® Is Here: Your Guide to Getting Started in Confluent

Confluent announces the General Availability of Queues for Kafka on Confluent Cloud and Confluent Platform with Apache Kafka 4.2. This production-ready feature brings native queue semantics to Kafka through KIP-932, enabling organizations to consolidate streaming and queuing infrastructure while...

New in Confluent Intelligence: A2A, Multivariate Anomaly Detection, Vector Search for Cosmos DB, Amazon S3 Vectors, and More

Explore new Confluent Intelligence features: A2A integration, multivariate anomaly detection, vector search for Cosmos DB and S3 Vectors, Private Link, and MCP support.

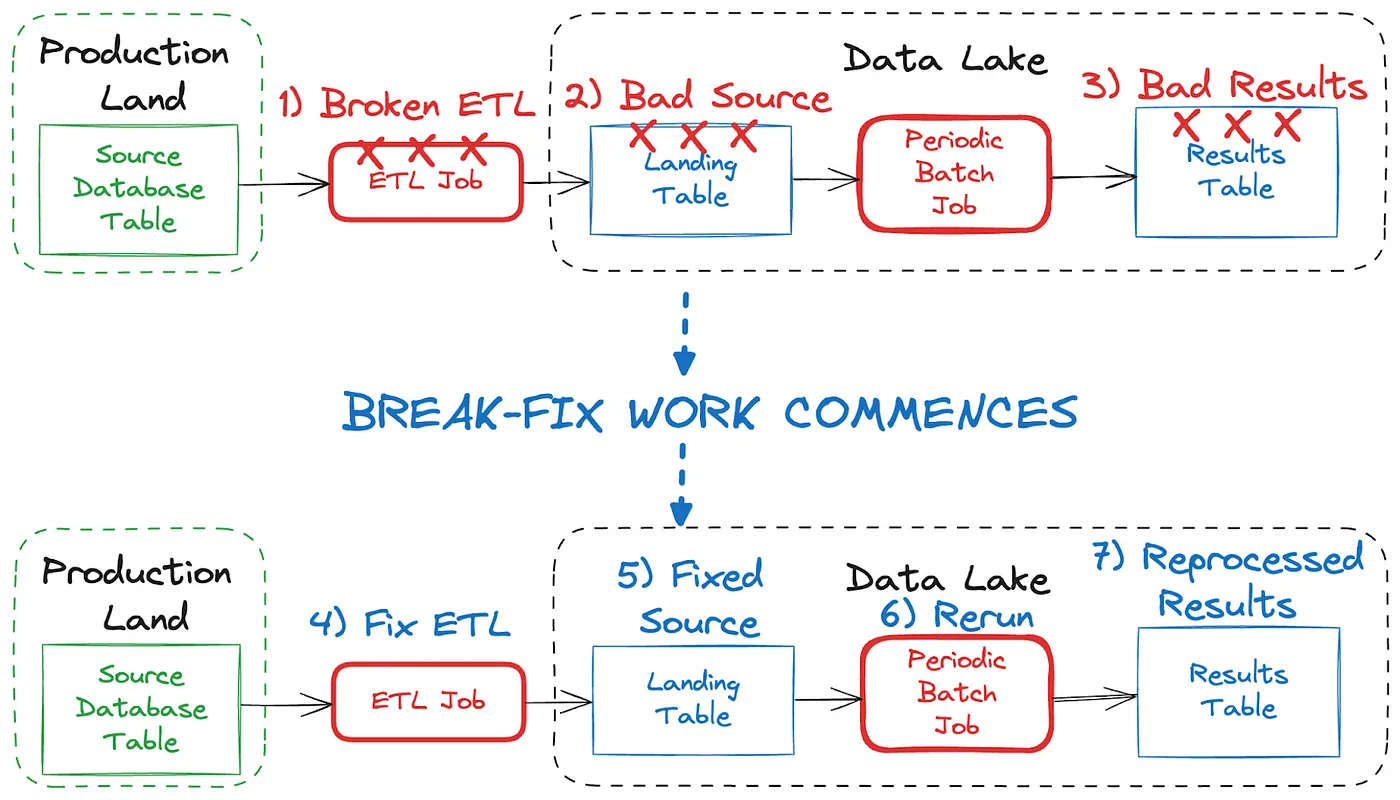

Shift Left: Bad Data in Event Streams, Part 1

At a high level, bad data is data that doesn’t conform to what is expected, and it can cause serious issues and outages for all downstream data users. This post looks at how bad data arises and how to deal with it in event streams.

Your Guide to the Apache Flink® Table API: An In-Depth Exploration

The blog post provides a comprehensive overview of the Flink Table API, demonstrating how it enables developers to express complex data processing logic using Java or Python in a user-friendly manner. It also includes practical examples and guidance, making it a valuable resource for anyone...

Convening With Data Streaming Engineers at Current 2024

We covered so much at Current 2024, from the 138 breakout sessions, lightning talks, and meetups on the expo floor to what happened on the main stage. If you heard any snippets or saw quotes from the Day 2 keynote, then you already know what I told the room: We are all data streaming engineers now.

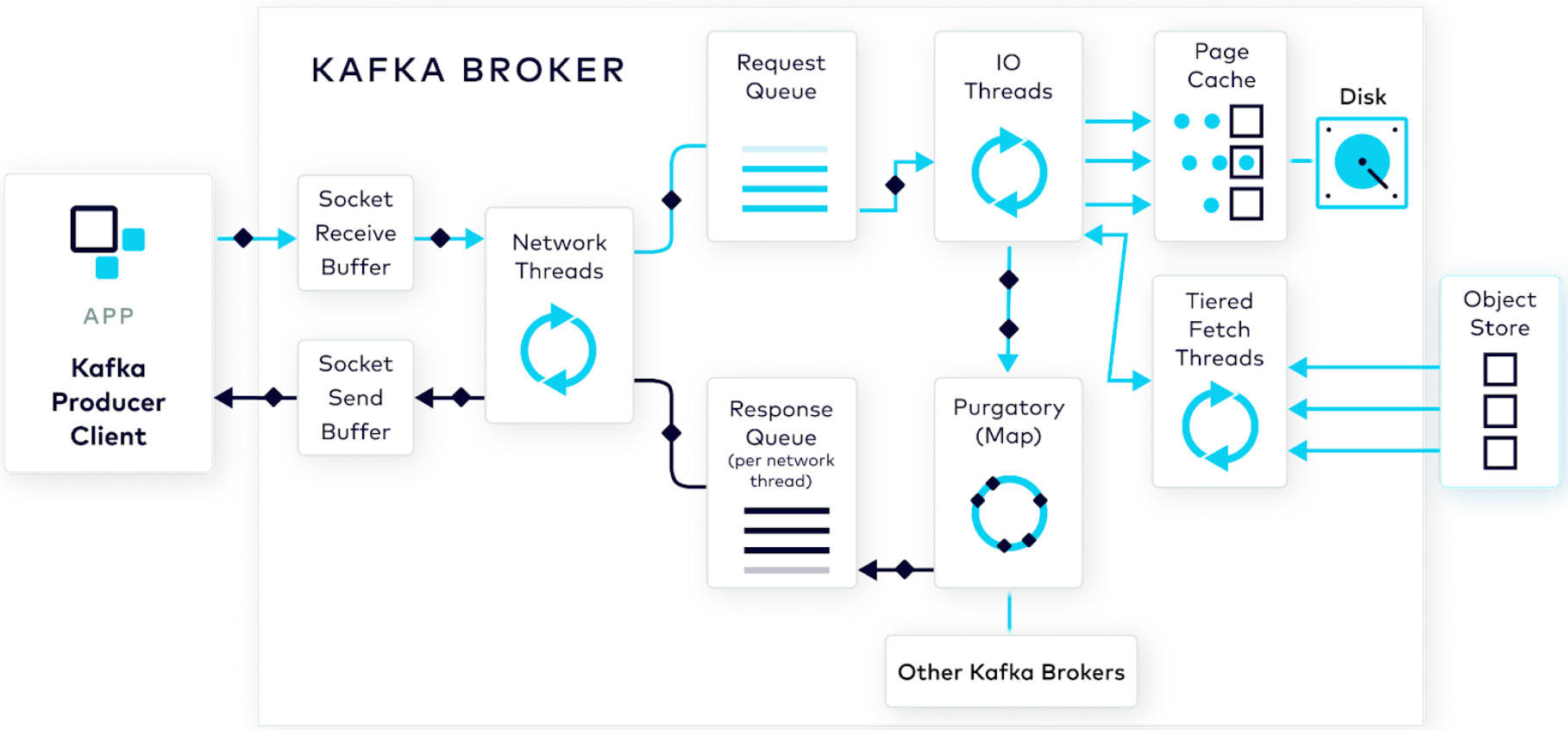

Handling the Producer Request: Kafka Producer and Consumer Internals, Part 2

In this post, the second in the Kafka Producer and Consumer Internals Series, we follow our brave hero—a well-formed produce request—which is on its way to be processed by the broker and have its data stored on the cluster.

Build, Manage, and Monitor Data Streaming Applications, All Within Your Favorite IDE

We’re excited to announce Early Access for Confluent for VS Code. This Visual Studio Code integration streamlines workflows, accelerates development, and enhances real-time data processing, all in a unified environment. This post shows how to get started and lists ways to get involved.

New in Confluent Cloud: Making Serverless Flink a Developer's Best Friend, Protecting Sensitive Data, and More

The Q3 Cloud Bundle Launch comes to you from Current 2024, where data streaming industry experts have come together to show you why data streaming is critical today, especially in the age of AI, and how it will become even more important in shaping tomorrow’s businesses...

Producing Messages With a Schema in Confluent Cloud Console

If you are a developer looking for an easier way to test your apps on topics with schemas, this is for you! Now you can easily create a message with a topic schema directly from the Confluent Cloud Console, with built-in validation and error checking.

Let Flink Cook: Mastering Real-Time Retrieval-Augmented Generation (RAG) with Flink

With AI model inference in Flink SQL, Confluent allows you to simplify the development and deployment of RAG-enabled GenAI applications by providing a unified platform for both data processing and AI tasks. Learn how you can use it to build a RAG-enabled Q&A chatbot using real-time airline data.

How Producers Work: Kafka Producer and Consumer Internals, Part 1

The beauty of Kafka as a technology is that it can do a lot with little effort on your part. In effect, it’s a black box. But what if you need to see into the black box to debug something? This post shows what the producer does behind the scenes to help prepare your raw event data for the broker.

Unlock Real-Time Value from DynamoDB Data with Confluent's CDC Source Connector

62% of Confluent Cloud clusters run on AWS. Meanwhile, hundreds of thousands of customers are using DynamoDB. This post explains how the connector helps customers integrate the two platforms.

5 Years of Confluent Cloud Connectors: Exploring Your Top Connector Picks

Since launching our first cloud connector in 2019, Confluent’s fully managed connectors have handled hundreds of petabytes of data and expanded to include over 80 fully managed connectors, custom connectors, and private networking. Discover popular connectors, SMTs, and use cases on Confluent Cloud...

Spring Into Confluent Cloud With Kotlin—Part 1: Producers and Consumers

Been searching far and wide for examples of Spring Boot with Kotlin integrated with Apache Kafka®? You’ve found it. But not just an example with unstructured data or no schema management. Not here! We’re going all the way with Stream Governance in Confluent Cloud. Let’s get into it.

Introducing the New Confluent Cloud Homepage UI: Enhancing User Experience

We are excited to announce the release of a new Confluent Cloud Homepage UI, inspired by many conversations and feature requests from our customer and field teams. In the past, many users bypassed the Homepage as just another click in the way of what they were trying to accomplish. This redesign...

Migrating Kafka to the Cloud: How Skai Went From 90K to 1.8K Topics

Skai completely revamped its interactive, ad-campaign dashboard by adding Apache Kafka and an in-memory database—eventually moving the solution to Confluent Cloud. Once on the Cloud, they devised an ingenious architecture for reducing the number of topics they needed.